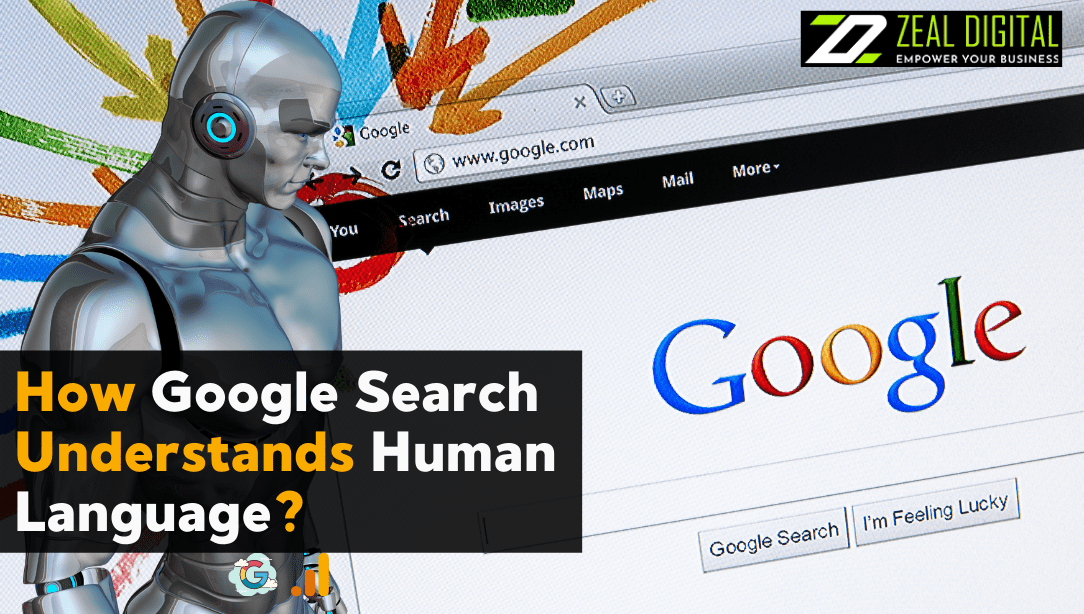

Wondering how Google interprets your search queries? A lot of work goes into delivering the right results, and one of the most important parts is understanding language. Search engines understand human language better than ever before thanks to advances in artificial intelligence (AI) and machine learning.

Google has explained how its AI interprets human language and delivers relevant results.

Over the years, Google has developed hundreds of algorithms, such as its early spelling system, to help deliver the right results. These older algorithms and systems are not discarded when Google builds new AI systems. Instead, Search relies on hundreds of algorithms and machine learning models, and it can only improve when old and new systems work well together.

Each algorithm and model has its own purpose, and they trigger at different times and in various combinations to help deliver the best results. Some of the more advanced systems play a more prominent role than others. Let’s look at some of the main AI systems used in Search today and what they do.

Pandu Nayak of Google introduces us to four AI systems that help Google understand human language: RankBrain, Neural Matching, BERT, and MUM. These systems work together to match your queries with relevant answers online. They were developed at different times, which shows that Google keeps developing new technologies to improve the user experience.

The following types of AI play a crucial role in how Google Search understands human language to deliver quick results:

1. RankBrain

RankBrain is a system that Google uses to better understand what users mean by their queries. It was deployed in 2015, and Google confirmed it publicly on October 26, 2015.

Let’s start with the first deep learning system Google deployed in Search. If you have been doing business online for some time, you will have seen how much the search engine has changed in the last seven years. Launched in 2015, RankBrain changed the way Google ranks results. It helps Search sift through the vast internet and give you the right answer in seconds.

It may be the oldest of these AI systems, but it still plays a vital role in how Search works. RankBrain understands how the words in a search relate to real-world concepts and links them to specific answers. For example, when you ask, “Who is the king of the jungle?”, RankBrain understands that you are referring to an animal, the lion, rather than a human king. Thanks to this, Google can return answers to the question you meant rather than pages that merely repeat its words.

2. Neural Matching

Most modern AI systems rely on neural networks. But it wasn’t until 2018 that Google added neural matching to Search, allowing it to better understand how queries relate to pages. Neural matching helps Search interpret fuzzier representations of concepts in queries and pages and match them to one another. It looks at the entire query or page rather than just the keywords, which makes the underlying concepts easier to understand. SEO services in Sydney take this behaviour into account when optimising pages.

For instance, take the search “insights how to manage a green.” If a friend asked you this, you would probably be stumped. But neural matching can make sense of it. By looking at the broader concepts represented in the query (management, leadership, personality and more), neural matching can work out that the searcher is looking for management tips based on a popular, colour-based personality guide.
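The difference between matching keywords and matching concepts can be sketched with a toy example. This is not Google’s implementation: the three-dimensional “concept” vectors below (management, personality, gardening) are invented by hand purely for illustration, whereas a real system learns such representations from data. The sketch scores two hypothetical pages against a puzzling query such as “insights how to manage a green,” first by shared words and then by similarity of averaged concept vectors.

```python
import math

# Hypothetical, hand-made concept vectors: [management, personality, gardening].
# A real neural matching system learns representations; these numbers are invented.
TOY_VECTORS = {
    "insights":    [0.6, 0.5, 0.0],
    "manage":      [1.0, 0.2, 0.1],
    "green":       [0.1, 0.6, 0.6],
    "leadership":  [0.9, 0.4, 0.0],
    "tips":        [0.5, 0.2, 0.3],
    "personality": [0.2, 1.0, 0.0],
    "colour":      [0.0, 0.6, 0.3],
    "lawn":        [0.0, 0.0, 1.0],
    "care":        [0.2, 0.1, 0.7],
}


def keyword_overlap(query, page):
    """Count the words that the query and the page have in common."""
    return len(set(query.lower().split()) & set(page.lower().split()))


def embed(text):
    """Average the toy vectors of all known words in the text."""
    known = [TOY_VECTORS[w] for w in text.lower().split() if w in TOY_VECTORS]
    return [sum(dim) / len(known) for dim in zip(*known)]


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


query = "insights how to manage a green"
page_a = "leadership tips for each personality colour"  # hypothetical page
page_b = "green lawn care"                              # hypothetical page

for name, page in [("page_a", page_a), ("page_b", page_b)]:
    print(name,
          "keyword overlap:", keyword_overlap(query, page),
          "concept similarity:", round(cosine(embed(query), embed(page)), 2))
```

With these made-up vectors, the lawn care page wins on keyword overlap because it repeats the word “green,” while the leadership page scores higher on concept similarity even though it shares no words with the query.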

3. BERT

BERT is a model for understanding meaning and context. Launched in 2019, BERT was a major step forward in natural language understanding, helping Google understand how combinations of words express different meanings and intents. Rather than just looking for content that matches individual words, BERT understands how a group of words conveys a complex idea. BERT reads words in sequence and recognises how they relate to each other, ensuring that important words in your query are not dropped from consideration, no matter how small they are.

For example, if you search for “can you get medicine for someone pharmacy,” BERT understands that you’re trying to find out whether you can pick up medication for someone else. Before BERT, Google took that short preposition for granted and mostly surfaced results about how to fill a prescription for yourself.
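Why small words matter can be shown with a toy comparison. The sketch below is not BERT and does not use any Google system; it only illustrates the information that is lost when short words are thrown away. The stop-word list and both queries are assumptions chosen for the example.

```python
# Illustrative stop-word list (an assumption, not taken from any real search engine).
STOP_WORDS = {"a", "can", "for", "from", "to", "you"}


def keyword_bag(query):
    """Keyword-style view: drop short function words and ignore word order."""
    return frozenset(w for w in query.lower().split() if w not in STOP_WORDS)


def ordered_tokens(query):
    """Sequence-style view: keep every word, in order."""
    return tuple(query.lower().split())


q1 = "can you get medicine for someone"
q2 = "can you get medicine from someone"

# Once the prepositions are dropped, the two queries look identical.
print(keyword_bag(q1) == keyword_bag(q2))

# Keeping every word in sequence preserves the difference in meaning.
print(ordered_tokens(q1) == ordered_tokens(q2))
```

A model that reads the full sequence at least has the preposition available to it, which is the precondition for telling “for someone” apart from “from someone.”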

BERT now plays a critical role in almost every English query. This is because BERT systems excel at two of the most important tasks in delivering relevant results: ranking and retrieval. Based on its understanding of language, BERT can very quickly rank documents for relevance.

4. MUM

MUM is the latest AI milestone from Google and stands for Multitask Unified Model. Google describes MUM as 1,000 times more powerful than BERT. It can not only understand language but also generate it.

MUM has been trained across 75 languages and on many different tasks at once. It is also multimodal, meaning it can understand information in both text and images. However, its use in Search is still in its early stages.

Conclusion

With RankBrain’s ability to link search terms to real-world concepts, neural matching’s grasp of the broader ideas behind queries and pages, BERT’s understanding of how word combinations change meaning, and MUM’s understanding of many languages, Google can understand, retrieve and rank information relevant to your search.

If you want to rank at the top of Google’s search results pages, you need to partner with a leading digital agency in Sydney that understands how these types of AI work.